Monitoring server health by clock speed variations

Back in 2003, when I set up my "new" old computer server, I wanted to make sure that its clock was always set correctly. This sort of thing is normally done with the NTP (Network Time Protocol) daemon. But that would have been too easy, and I wanted even less traffic than I assumed NTP would cause. All I cared about was keeping my computer within a few seconds of actual time. So I set up a "cron job" that would, at an odd time of night, sync the local clock with a time server via the command "ntpdate". This command, interestingly, reports how much of an adjustment it actually makes when it's run. I decided to log this to a file to see how my server was doing.
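Reading such a log back could look something like the sketch below. It assumes each log line has the shape that ntpdate typically prints (date, time, `ntpdate[pid]`, then `adjust` or `step`, the server, and the offset in seconds). The function names and the sample addresses are made up for illustration, and the actual log file may well have looked different.

```python
import re

# Assumed line shape, based on typical ntpdate output, for example:
#   "25 Apr 03:17:01 ntpdate[1234]: adjust time server 192.0.2.1 offset 0.103456 sec"
OFFSET_RE = re.compile(
    r"^(?P<day>\d{1,2}) (?P<month>[A-Za-z]{3}) (?P<time>\d{2}:\d{2}:\d{2}) "
    r"ntpdate\[\d+\]: (?P<mode>adjust|step) time server (?P<server>\S+) "
    r"offset (?P<offset>[-+]?\d+\.\d+) sec"
)


def parse_ntpdate_line(line):
    """Return (day, month, mode, offset_seconds), or None if the line does not match."""
    m = OFFSET_RE.match(line.strip())
    if m is None:
        return None  # e.g. a failed sync, which shows up as a missing data point
    return int(m["day"]), m["month"], m["mode"], float(m["offset"])


def parse_log(lines):
    """Keep only the lines that report an actual clock adjustment."""
    return [rec for rec in map(parse_ntpdate_line, lines) if rec is not None]


if __name__ == "__main__":
    sample = [
        "25 Apr 03:17:01 ntpdate[1234]: adjust time server 192.0.2.1 offset 0.103456 sec",
        "26 Apr 03:17:01 ntpdate[1240]: no server suitable for synchronization found",
    ]
    for record in parse_log(sample):
        print(record)
```

Lines that do not report an offset are simply skipped, so nights where the sync failed turn into gaps in the data rather than bogus values.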

Getting ready to upgrade this server to newer hardware, I graphed the daily adjustments versus time, out of curiosity. There were quite a few missing data points, due to NTP not working at random times, for reasons I did not investigate. There were also large "spikes" in the graph for the times that my computer was rebooted and set its initial time from the CMOS clock, rather than keeping it off the main crystal.

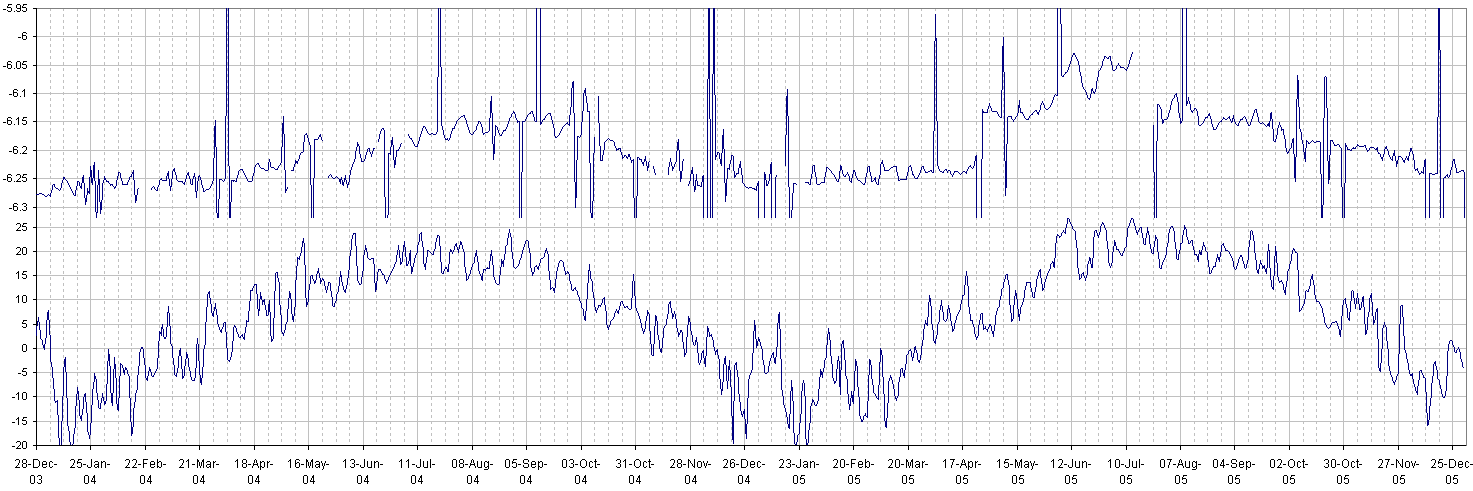

There were various interesting features on this graph, including seasonal variations. So I graphed this together with daily average temperature from the local weather station.

Daily clock adjust and average daily temperature vs. date, 2004-2005

Removing the data points for after a reboot, there are still residual smaller spikes, which may have been caused by network delays. I can see the graph go up and down in approximate trends. Given the seasonal nature, I was pretty sure this was caused by changes in temperature. Comparing this to outside temperature I can see that at least for the summer, temperature changes are reflected in daily clock adjustments with a bit of lag. I keep the server in the basement, so the temperature variations that it experiences are only perhaps a third of the temperature variation outside. For the winter, there is little correlation because the furnace regulates the inside temperature. But, seeing that I don't have air conditioning, outside temperatures do reflect temperatures in my basement during the summer.
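The "bit of lag" could be estimated rather than eyeballed. The sketch below correlates temperature on one day with the clock adjustment a few days later and reports the correlation for each lag. It assumes two equally long daily series with reboot spikes and missing days already removed or filled in. The function names and the numbers in the example are invented for illustration and are not the measured data.

```python
from math import sqrt


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def lagged_correlation(temps, adjustments, max_lag):
    """Correlate temperature on day d with the adjustment on day d + lag."""
    result = {}
    for lag in range(max_lag + 1):
        xs = temps[: len(temps) - lag]   # drop the last `lag` temperatures
        ys = adjustments[lag:]           # drop the first `lag` adjustments
        result[lag] = pearson(xs, ys)
    return result


if __name__ == "__main__":
    # Made-up example: adjustments follow temperature two days later.
    temps = [10, 12, 15, 11, 9, 14, 18, 13, 10, 12]
    adjustments = [0.0, 0.0] + [0.01 * t for t in temps[:-2]]
    by_lag = lagged_correlation(temps, adjustments, max_lag=4)
    best = max(by_lag, key=by_lag.get)
    print("best lag in days:", best)
```

The lag with the highest correlation is only a rough estimate, and for the winter months, where the furnace flattens the indoor temperature, it would not be expected to mean much.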

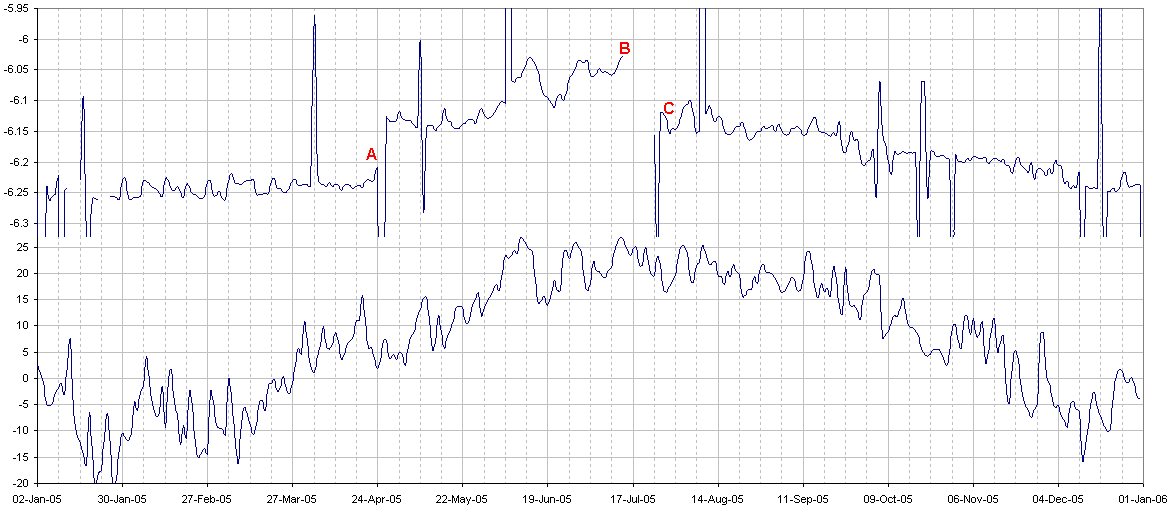

Even more interesting was a discontinuity in the daily adjustment amount after the 24th of April (A), and before and after July 17th of 2005 (B, C). See the notations on the graph of 2005 below:

Daily clock adjust and average daily temperature vs. date in 2005

So I looked into what could have caused that change. It turned out that on April 25th, 2005 (A), I replaced the power supply in the computer with another old power supply with a somewhat quieter variable-speed fan. This power supply made a difference in CPU speed of about 0.1 seconds per day, or around 1 part per million. I assume this was caused either by a slight change in supply voltage, or by reduced airflow from the quieter fan.
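The conversion from seconds per day to parts per million is just a division by the number of seconds in a day. A small sketch, with a helper name chosen for illustration:

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86400


def drift_ppm(seconds_per_day):
    """Convert a clock drift in seconds per day to parts per million."""
    return seconds_per_day / SECONDS_PER_DAY * 1e6


if __name__ == "__main__":
    # 0.1 s/day works out to a little over 1 ppm.
    print(round(drift_ppm(0.1), 2))  # 1.16
```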

While I was travelling in Europe three months later, my server ended up dying of a power supply failure (B). When I got back, I put the old power supply back in on July 24th (C), causing another change in CPU speed, back to the characteristics of the original power supply.

Also interesting is that the power supply failed during a heat wave. Looking at the heat wave from around June 10th, we can see that it was reflected in the server's CPU speed with maybe two days of lag. So the computer was probably as warm as it ever got when the power supply failed. High temperature was probably what pushed the quieter power supply over the edge.

So what can I conclude from this? Well, CPU speed does show interesting variations, and may give clues as to why a computer may have failed, especially after the fact. I think this sort of thing might be interesting if you have a server that you keep offsite and want to monitor whether something may be up with it, without adding extra hardware. I figure that sort of thing might be useful if you are somebody like Google, with thousands of cheap computers that fail on a regular basis. Also, if temperature can indeed drive a system over the edge, this might be useful for testing servers at times when failure is more acceptable.
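As a rough idea of what such monitoring could look like, the sketch below flags days where the median daily adjustment over the following week differs noticeably from the median over the preceding week, which is the kind of step seen at (A) and (C). The window length and the threshold are arbitrary illustration values, not something derived from the data, and the function name is made up.

```python
from statistics import median


def find_level_shifts(values, window=7, threshold=0.05):
    """Return indices where the median of the next `window` values differs
    from the median of the previous `window` values by more than `threshold`."""
    shifts = []
    for i in range(window, len(values) - window + 1):
        before = median(values[i - window:i])
        after = median(values[i:i + window])
        if abs(after - before) > threshold:
            shifts.append(i)
    return shifts


if __name__ == "__main__":
    # Made-up series: the daily adjustment steps up by 0.1 s on day 14.
    daily_adjust = [0.30] * 14 + [0.40] * 14
    print(find_level_shifts(daily_adjust))
```

Using medians rather than means keeps a single reboot spike from triggering a false alarm. A real step shows up as a short run of neighbouring indices around the change, not as a single day.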

As for me, it's an interesting curiosity worth sharing.

Back to Matthias Wandel's home page